Overview

Internal R&D exploring adaptive robotics and real-time human-robot interaction through AI-driven perception systems.

Objective

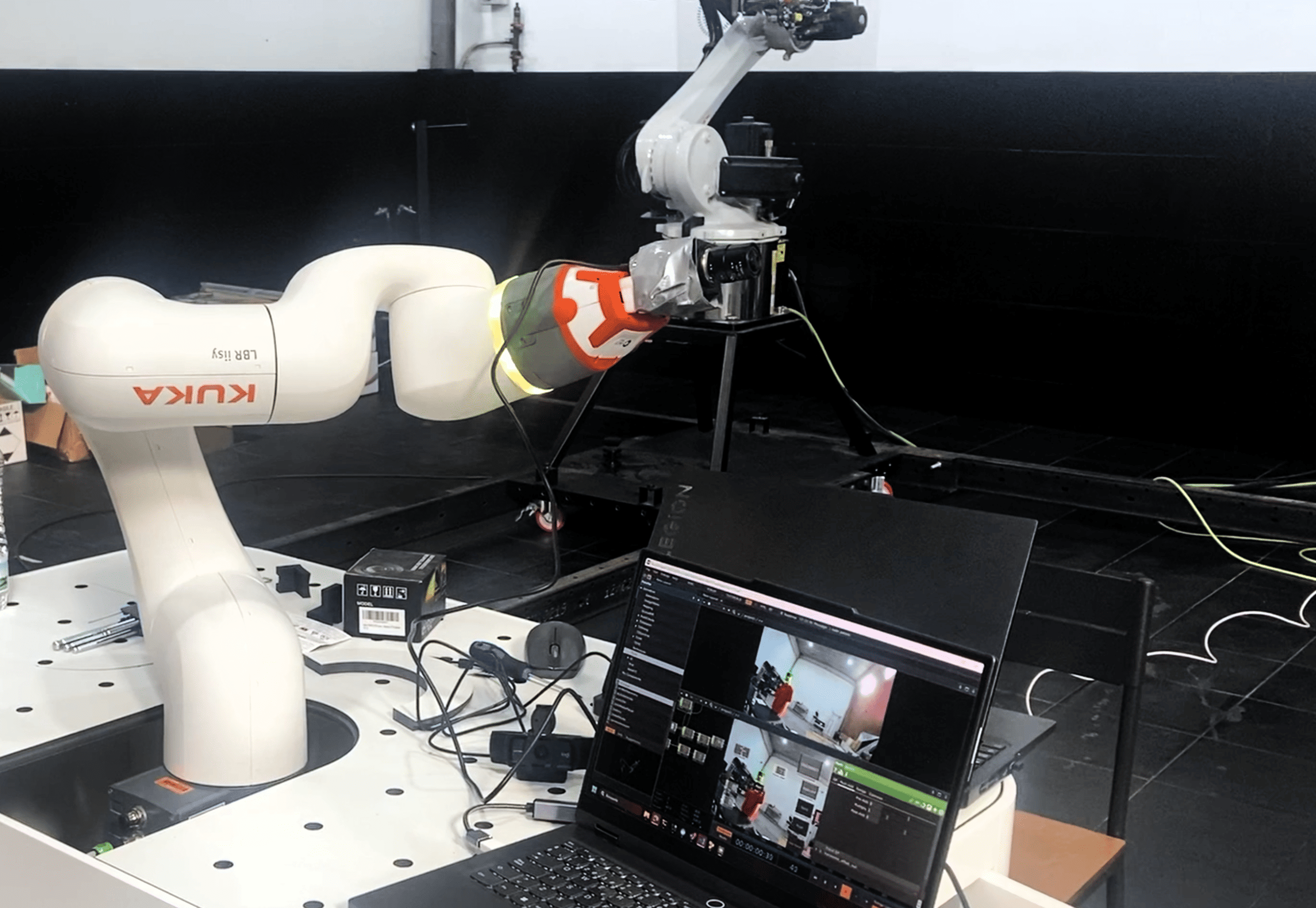

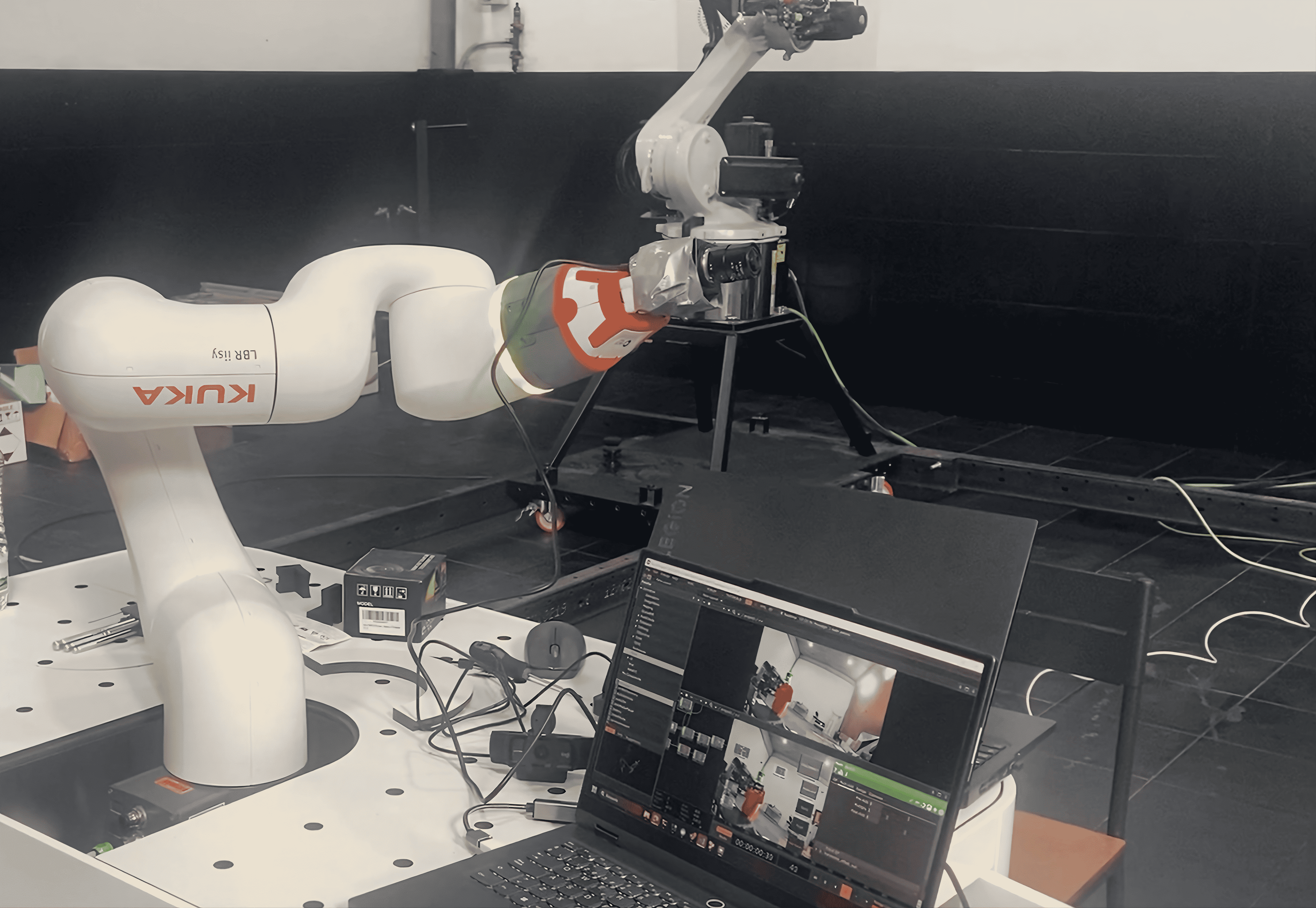

Development of a real-time human tracking system using a KUKA iisy robot, combining camera vision, AI-based face tracking and custom motion control.

System

Integration of visual perception, tracking algorithms, custom inverse kinematics and interactive software into a unified real-time control architecture.
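The project's own inverse kinematics for the KUKA iisy are not described here. As a rough illustration of what an inverse-kinematics step does, the sketch below solves the textbook planar two-link case: given a target point, it returns the joint angles that place the arm tip there. The function names (`two_link_ik`, `two_link_fk`) and the link lengths are hypothetical and stand in for a much more involved six-axis solution.

```python
import math


def two_link_ik(x, y, l1, l2):
    """Joint angles (radians) that place the tip of a planar two-link arm at (x, y).

    Returns the solution with a non-negative elbow angle.
    Textbook example only; not the project's actual solver.
    """
    d2 = x * x + y * y
    # Law of cosines gives the elbow angle from the target distance.
    cos_elbow = (d2 - l1 * l1 - l2 * l2) / (2 * l1 * l2)
    if not -1.0 <= cos_elbow <= 1.0:
        raise ValueError("target out of reach")
    elbow = math.acos(cos_elbow)
    # Shoulder angle: direction to the target minus the offset caused by the second link.
    shoulder = math.atan2(y, x) - math.atan2(
        l2 * math.sin(elbow), l1 + l2 * math.cos(elbow)
    )
    return shoulder, elbow


def two_link_fk(shoulder, elbow, l1, l2):
    """Forward kinematics, used here to check the inverse solution."""
    x = l1 * math.cos(shoulder) + l2 * math.cos(shoulder + elbow)
    y = l1 * math.sin(shoulder) + l2 * math.sin(shoulder + elbow)
    return x, y
```

Feeding the returned angles back through `two_link_fk` should reproduce the target point, which is a convenient sanity check for any custom solver.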

Execution

The system detects and tracks a person through camera input, processes the frames with AI models, and generates robot motion on the fly in response, without relying on predefined sequences.
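One way to picture the step from detection to motion is sketched below, assuming a simple pinhole camera model: the centre of a detected face, in pixels, is converted to pan and tilt offsets, and each new target is smoothed so the robot does not jitter. The `Camera` class, its default resolution and field of view, and the functions `pixel_to_angles` and `smooth` are hypothetical, not taken from the project.

```python
import math
from dataclasses import dataclass


@dataclass
class Camera:
    # Assumed values for illustration; a real setup would use calibrated intrinsics.
    width: int = 640
    height: int = 480
    hfov_deg: float = 60.0


def pixel_to_angles(cx, cy, cam):
    """Convert a face centre in pixels to (pan, tilt) offsets in degrees."""
    # Focal length in pixels, derived from the horizontal field of view.
    f = (cam.width / 2) / math.tan(math.radians(cam.hfov_deg) / 2)
    pan = math.degrees(math.atan2(cx - cam.width / 2, f))
    # Image y grows downward, so flip the sign to make "up" positive.
    tilt = math.degrees(math.atan2(cam.height / 2 - cy, f))
    return pan, tilt


def smooth(previous, target, alpha=0.2):
    """Exponential smoothing: move a fraction alpha of the way toward the target."""
    return previous + alpha * (target - previous)
```

In a loop, each frame's face centre would pass through `pixel_to_angles`, and the smoothed result would become the next motion target. A smaller `alpha` gives calmer but slower motion.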

Technology

Result

A perception-driven robotic system capable of sensing, interpreting and responding to human presence in real time, advancing BAÜP’s research into adaptive, non-linear robotic behavior.